Using Temperature with Top_p Sampling

Getting the most out of OpenAI’s GPT models starts with understanding two sampling parameters: temperature and top_p. These controls shape text generation behavior across domains such as code generation, creative writing, and chatbot responses. Let’s look at each parameter in turn.

Parameter Playbook: Tuning Temperature and Top_p for 7 Different Scenarios

For beginners, it’s vital to grasp how the temperature and top_p parameters affect the output from GPT. Here are 7 examples of output from GPT with varying top_p and temperature settings.

| Use Case | Temperature | Top_p | Description | Example Output |
| --- | --- | --- | --- | --- |
| Code Generation | 0.2 | 0.1 | Generates code adhering to established patterns and conventions. Output is deterministic and focused. | … adhere strictly to the established coding standards, ensuring robustness and maintainability. |
| Data Analysis Scripting | 0.2 | 0.1 | Generates correct and efficient data analysis scripts. Output is deterministic and focused. | … efficiently process large datasets, extracting meaningful insights and trends from the raw data. |
| Code Comment Generation | 0.3 | 0.2 | Produces concise and relevant code comments. Output is deterministic and follows conventions. | … be well-commented, ensuring that every function and variable was clearly explained for future reference. |
| Chatbot Responses | 0.5 | 0.5 | Crafts conversational responses balancing coherence and diversity. Output is natural and engaging. | … enable interactive dialogues between the user and the virtual world, making each interaction feel real and engaging. |
| Exploratory Code Writing | 0.6 | 0.7 | Generates code exploring alternative solutions and creative approaches. Output is less pattern-constrained. | … explore novel algorithms to solve complex problems, paving new pathways in the realm of computational creativity. |
| Creative Writing | 0.7 | 0.8 | Fosters creative and diverse text for storytelling. Output is exploratory and less pattern-constrained. | … unfold a narrative of a digital realm where each algorithm brought characters to life in a whimsical tale of adventure. |
| Extreme Creativity | 2.0 | 1.0 | Engenders highly random and often nonsensical output. Unleashes extreme creativity at the cost of coherence. | … transform the syntax trees into a garden of recursive tendrils, where each node blossomed into arrays of poetic expressions. |
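
If you switch between these scenarios in code, one option is to keep the settings in a small lookup table. The sketch below is only an illustration: the names `SAMPLING_PRESETS` and `sampling_params` and the snake_case keys are hypothetical, and the values are simply copied from the table above.

```python
# Hypothetical preset table mirroring the scenarios above; the names and keys
# are illustrative and not part of any OpenAI API.
SAMPLING_PRESETS = {
    "code_generation": {"temperature": 0.2, "top_p": 0.1},
    "data_analysis_scripting": {"temperature": 0.2, "top_p": 0.1},
    "code_comment_generation": {"temperature": 0.3, "top_p": 0.2},
    "chatbot_responses": {"temperature": 0.5, "top_p": 0.5},
    "exploratory_code_writing": {"temperature": 0.6, "top_p": 0.7},
    "creative_writing": {"temperature": 0.7, "top_p": 0.8},
    "extreme_creativity": {"temperature": 2.0, "top_p": 1.0},
}


def sampling_params(use_case):
    """Return a copy of the sampling settings for a named scenario."""
    try:
        return dict(SAMPLING_PRESETS[use_case])
    except KeyError:
        raise ValueError(f"unknown use case: {use_case!r}") from None


print(sampling_params("chatbot_responses"))
# {'temperature': 0.5, 'top_p': 0.5}
```

The returned dictionary can be unpacked as keyword arguments into whichever completion call you use, so the scenario name is the only thing that changes between requests.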

Delving into Temperature

Temperature can be thought of as the creativity dial for GPT-3. A higher temperature value (e.g., 0.7) produces more diverse and creative output, while a lower value (e.g., 0.2) produces more deterministic and focused output. Technically, temperature rescales the probability distribution over the possible tokens at each step of the generation process: values below 1 sharpen it toward the most likely tokens, and values above 1 flatten it. At a temperature of 0 the model effectively always picks the most likely token, so the output is close to deterministic.
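
To make the rescaling concrete, here is a minimal sketch of a temperature-scaled softmax in plain Python. It assumes the standard formulation, in which raw scores (logits) are divided by the temperature before being normalized. The function name and the three example scores are made up for illustration and do not come from any OpenAI library.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities after dividing them by temperature."""
    if temperature <= 0:
        # Temperature 0 is usually handled as greedy (argmax) decoding instead.
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate tokens
for t in (0.2, 0.7, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it shows the top token taking almost all of the probability at 0.2 and the three tokens drawing much closer together at 2.0, which is the sharpening and flattening described above.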

Temperature in Practice

Let’s consider a practical scenario where you’re generating a story based on a given prompt. A higher temperature might yield a more whimsical, unexpected narrative, while a lower temperature might produce a more coherent, predictable storyline.

import openai  # legacy openai-python interface (versions before 1.0)

# Set up your API key
openai.api_key = 'your-api-key'

# High temperature example: expect a less predictable narrative with more varied wording
response = openai.Completion.create(engine="davinci", prompt="Once upon a time,", temperature=0.7)
print(response['choices'][0]['text'])

# Low temperature example: expect a more predictable narrative following a linear storyline
response = openai.Completion.create(engine="davinci", prompt="Once upon a time,", temperature=0.2)
print(response['choices'][0]['text'])

Unfolding Top_p Sampling (Nucleus Sampling)

Top_p sampling, also known as nucleus sampling, takes a different route. It samples only from the smallest set of most-likely tokens (the nucleus) whose cumulative probability mass reaches a certain threshold (top_p). For instance, with top_p set to 0.1, GPT-3 will consider only the tokens that make up the top 10% of the probability mass for the next token. Because the size of that set depends on how confident the model is at each step, the candidate vocabulary adapts to context.
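
The selection step can be sketched in a few lines of Python. This is an illustration of the general nucleus-sampling idea, not OpenAI's implementation. The `nucleus` function and the toy next-token distribution are hypothetical.

```python
def nucleus(probs, top_p):
    """Keep the most probable tokens until their cumulative probability
    reaches top_p, then renormalize the kept probabilities to sum to 1."""
    if not 0 < top_p <= 1:
        raise ValueError("top_p must be in (0, 1]")
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:
            break
    return {token: p / cumulative for token, p in kept}


# Hypothetical next-token distribution after "Once upon a time, there lived a"
next_token_probs = {"king": 0.5, "queen": 0.25, "dragon": 0.15, "toaster": 0.1}

print(nucleus(next_token_probs, 0.1))  # only the single most likely token survives
print(nucleus(next_token_probs, 0.8))  # the three most likely tokens survive
```

With a low threshold the nucleus collapses to the top token. Raising it lets less likely tokens back in while still excluding the tail.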

Top_p Sampling Insights from the Community

The OpenAI Community has shared invaluable insights on mastering top_p. It’s often observed that a lower top_p value like 0.1 yields more focused and coherent text, while higher values like 0.9 allow for a broader vocabulary and more creative text generation.

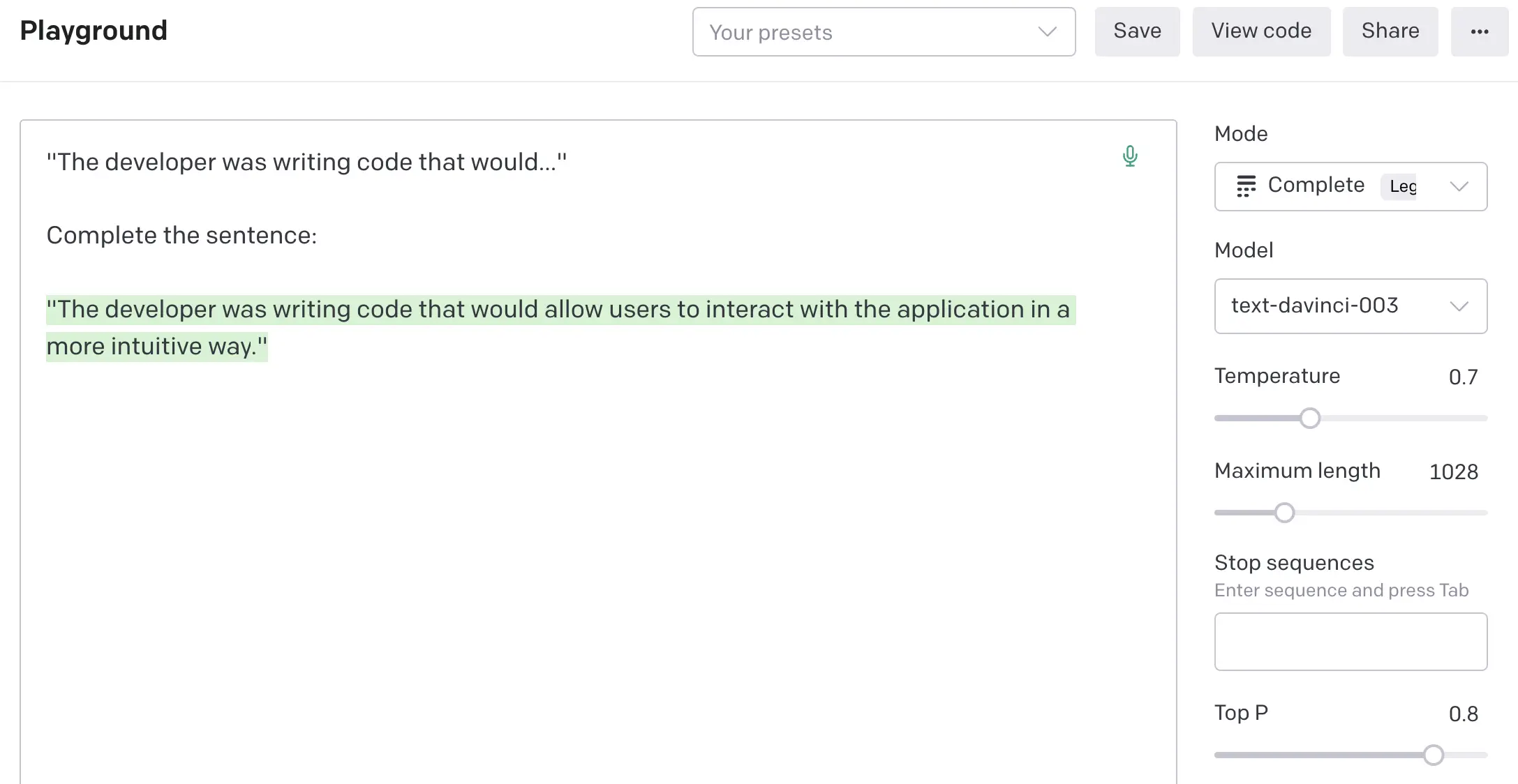

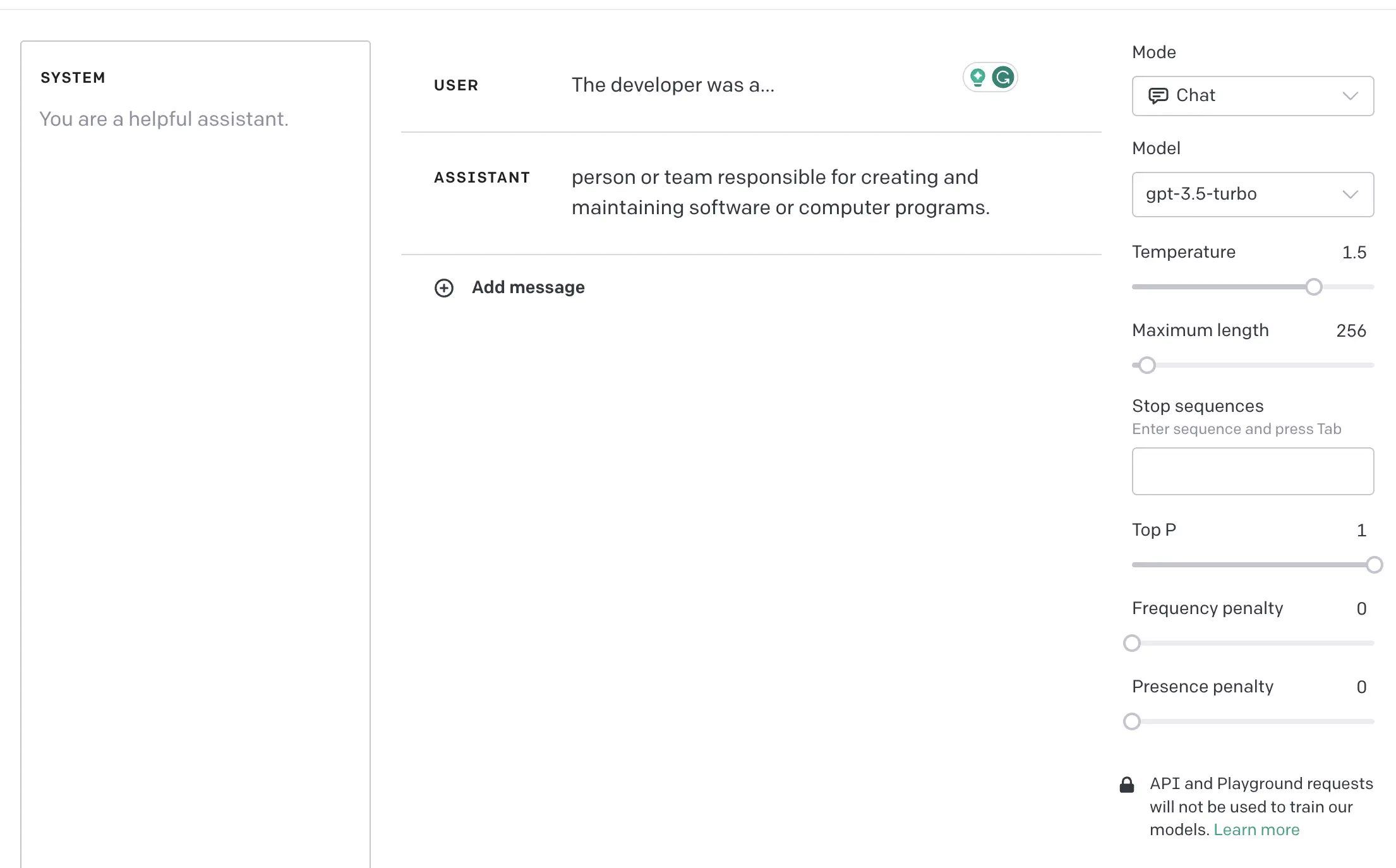

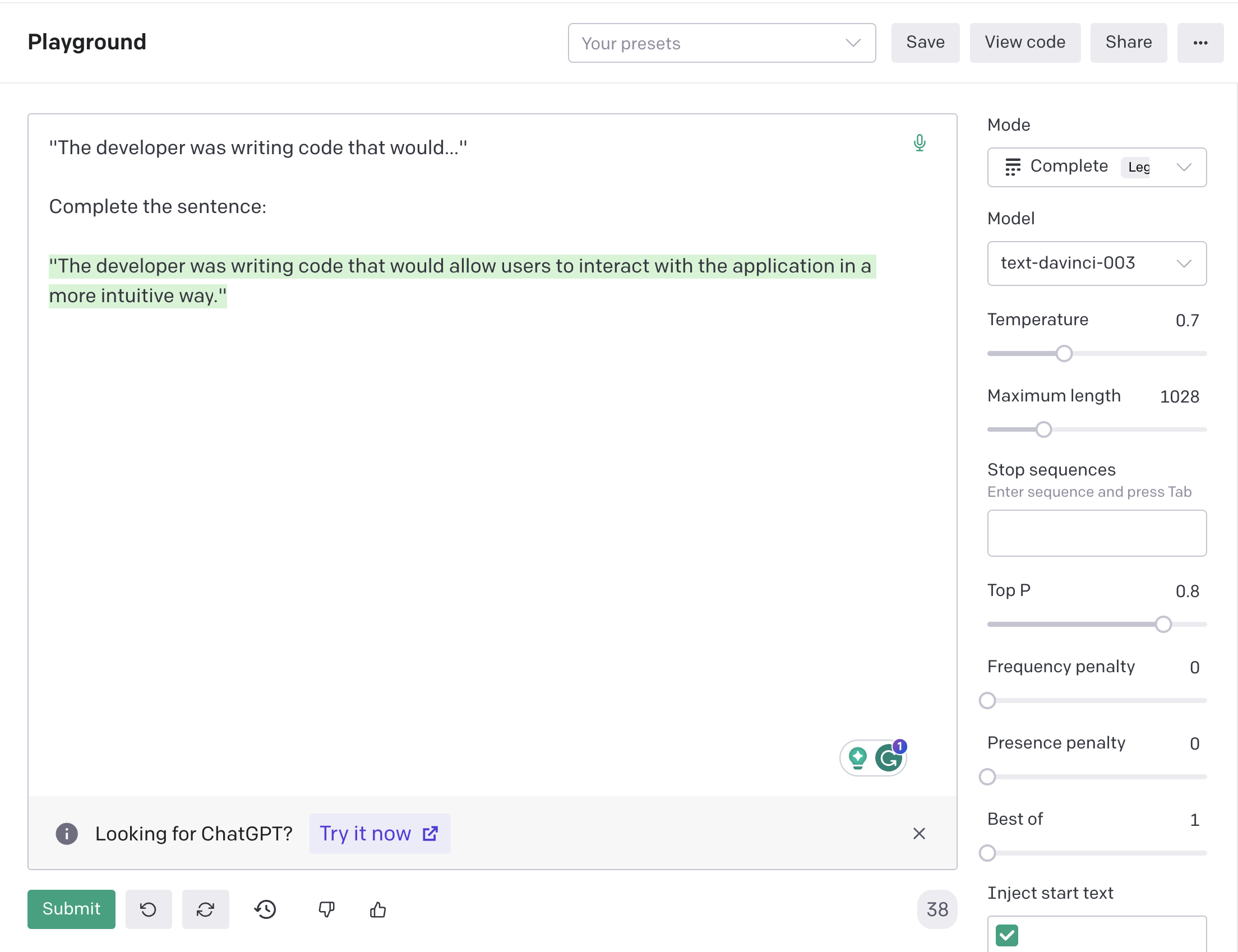

Experiencing Top_p in the OpenAI Playground

The OpenAI Playground is a fantastic sandbox to experiment with the top_p parameter. Here, you can adjust the top_p value and observe how the generated text morphs in real time, catering to different levels of creativity and determinism.

- Navigate to the OpenAI Playground.

- Select the GPT-3 engine from the available options.

- In the parameters section, you’ll find a field for top_p. Adjust this value and hit the Submit button to see how it influences the generated text.

- Experiment with different top_p values and observe how the narrowed or expanded token selection impacts the output.

Implementing Top_p with the OpenAI API

Using top_p sampling in your projects via the OpenAI API is straightforward. Here’s how you can integrate top_p sampling in a Python script:

import openai  # legacy openai-python interface (versions before 1.0)

# Set up your API key
openai.api_key = 'your-api-key'

# Top_p sampling example
response = openai.Completion.create(engine="davinci", prompt="Once upon a time,", top_p=0.1)
print(response['choices'][0]['text'])
# Example output: there lived a king who...

Additional Resources and Further Reading

With this extensive guide, you are now equipped to venture into the realm of controlled text generation with OpenAI’s GPT-3. The temperature and top_p parameters are your navigator and compass in this exciting journey, enabling you to steer the text generation to align with your desired outcomes. Happy text generating!

What is top_p sampling?

Top_p sampling, also known as nucleus sampling, is a decoding method used in natural language processing to control how diverse the generated text is. It works by selecting a subset (the "nucleus") of the next-word probability distribution, from which the next word is sampled. The subset is the smallest set of most-likely words whose total probability is greater than or equal to a specified threshold (the "p" in "top_p").

What is the difference between top_p and temperature?

Temperature sampling is another method used to control the randomness of text generation. A higher temperature value results in more randomness and creativity, while a lower value makes the output more deterministic and focused on the most likely next words. The two differ in mechanism: temperature reshapes the probabilities of all candidate words, whereas top_p cuts off the low-probability tail and samples only from the most likely words.

Can top_p and temperature be used together?

Yes, top_p and temperature can be used together. Temperature controls the overall randomness of the generated text, while top_p limits how far into the unlikely tail of the distribution the model may reach. Using both together can help balance randomness and determinism in the output, although OpenAI’s API reference generally recommends adjusting one or the other rather than both at once.
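
As a rough sketch of how the two settings can interact, the function below applies temperature first and nucleus truncation second, then draws one token. That ordering is an assumption borrowed from common open-source samplers. OpenAI does not document how its models combine the two internally. `sample_next_token` and the toy scores are hypothetical.

```python
import math
import random


def sample_next_token(logits, temperature=1.0, top_p=1.0, rng=random):
    """Sample one token: temperature-scaled softmax, then top_p truncation."""
    if temperature <= 0 or not 0 < top_p <= 1:
        raise ValueError("need temperature > 0 and 0 < top_p <= 1")

    # Step 1: rescale scores by temperature and normalize to probabilities.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())
    weights = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(weights.values())
    probs = {tok: w / total for tok, w in weights.items()}

    # Step 2: keep the most probable tokens until they cover top_p of the mass.
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for tok, p in ranked:
        kept.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break

    # Step 3: draw from the surviving tokens in proportion to their probability.
    tokens = [tok for tok, _ in kept]
    return rng.choices(tokens, weights=[p for _, p in kept], k=1)[0]


logits = {"king": 2.0, "queen": 1.0, "dragon": 0.1}  # hypothetical scores
rng = random.Random(0)  # seeded so repeated runs give the same draws
print([sample_next_token(logits, temperature=0.7, top_p=0.8, rng=rng) for _ in range(5)])
```

Under this ordering a low temperature also shrinks the nucleus, because a sharper distribution reaches the top_p threshold with fewer tokens. This is one reason changing both settings at once can be harder to reason about than changing just one.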

What is the range of values for top_p?

The range of values for top_p is between 0 and 1. A value of 0.5, for example, means that the chosen subset of words will have a cumulative probability of at least 0.5. A lower value of top_p will result in a smaller, more focused subset, while a higher value will result in a larger, more diverse subset.

How does top_p affect the randomness and creativity of the generated text?

Top_p affects the randomness and creativity of the generated text by controlling the diversity of the words that can be chosen as the next word. A higher top_p value allows for a wider range of possible next words, which can result in more creative and unpredictable outputs. Conversely, a lower top_p value restricts the pool of possible next words, making the output more predictable and less diverse.