Italy’s data regulator, Garante per la Protezione dei Dati Personali, has demanded that OpenAI stop using the personal information of millions of Italians in its ChatGPT training data, claiming the AI company lacks the legal right to do so. In response, OpenAI has blocked Italian users from accessing its chatbot while it addresses the regulator’s concerns. This is the first action taken against ChatGPT by a Western regulator, and it could set a precedent for similar decisions across Europe. The case highlights growing privacy concerns around the development of generative AI models, which often use vast amounts of internet data for training.

Italian Data Regulator Orders OpenAI to Stop Using Personal Information in ChatGPT

OpenAI, creator of the GPT-3 large language model, faces a temporary emergency decision from Italy’s data regulator ordering it to stop using the personal information of millions of Italians included in its training data. The regulator, Garante per la Protezione dei Dati Personali, stated that OpenAI does not have the legal right to use people’s personal information in ChatGPT. OpenAI has suspended access to its chatbot in Italy while it responds to the officials’ investigation. The move highlights the privacy tensions around creating giant generative AI models, which are often trained on vast swathes of internet data.

Possible Similar Decisions Across Europe

The Italian regulator’s action is the first taken against ChatGPT by a Western regulator, and it might prompt similar decisions across Europe. Since the Italian announcement, data regulators in France, Germany, and Ireland have contacted the Garante for more details on its findings. Norway’s data protection authority is monitoring developments. Its head of international, Tobias Judin, said that if the model is built on data that may have been unlawfully collected, it raises questions about whether anyone can use the tools legally.

Scrutiny on Large AI Models Increasing

The Italian decision comes as scrutiny of large AI models is steadily increasing. Since March 29, tech leaders have called for a pause on the development of systems like ChatGPT, fearing their future implications. Judin says the Italian decision highlights more immediate concerns: if the model was developed by scraping the internet for whatever information is available, that could be a significant problem. Essentially, AI development to date may have a massive shortcoming, Judin adds.

How to Improve the Privacy of Your ChatGPT Prompts

ChatGPT, the natural language processing AI developed by OpenAI, has come under scrutiny from data regulators in Europe over its use of personal information. While OpenAI has already taken steps to address these concerns, users can take additional measures to improve the privacy of their own prompts.

One potential approach is to encode chat prompts in Base64 and have ChatGPT decode them before providing a response. Base64 is a reversible encoding rather than encryption, so this does not hide personally identifiable information from the model, which must decode the prompt in order to answer it. What it does is keep the prompt from being readable at a glance wherever the raw text is displayed or stored.

Encode Prompts Using Base64 For Enhanced Privacy

To implement this approach, users encode their chat prompts in Base64 before sending them to ChatGPT. The AI then decodes the prompts and provides a response in the same format. This keeps personal information out of plain view in the conversation text. Anything the model can decode it can also read, however, so the technique is best treated as obfuscation that may reduce casual exposure, not as a guarantee against privacy breaches or regulatory violations.
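As a minimal sketch of the encoding step, the following Python uses only the standard library's `base64` module. The helper names `encode_prompt` and `decode_reply` are illustrative assumptions, not part of any ChatGPT tooling, and UTF-8 is assumed as the text encoding.

```python
import base64


def encode_prompt(text: str) -> str:
    """Return the Base64 form of a UTF-8 prompt (hypothetical helper)."""
    return base64.b64encode(text.encode("utf-8")).decode("ascii")


def decode_reply(encoded: str) -> str:
    """Decode a Base64 reply back into readable text (hypothetical helper)."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    prompt = "What is the capital of Italy?"
    encoded = encode_prompt(prompt)
    print(encoded)                # paste this into the chat instead of the prompt
    print(decode_reply(encoded))  # round trip recovers the original text
```

The round trip illustrates that anyone holding the encoded string can recover the original text, which is why the encoding obscures a prompt but does not secure it.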

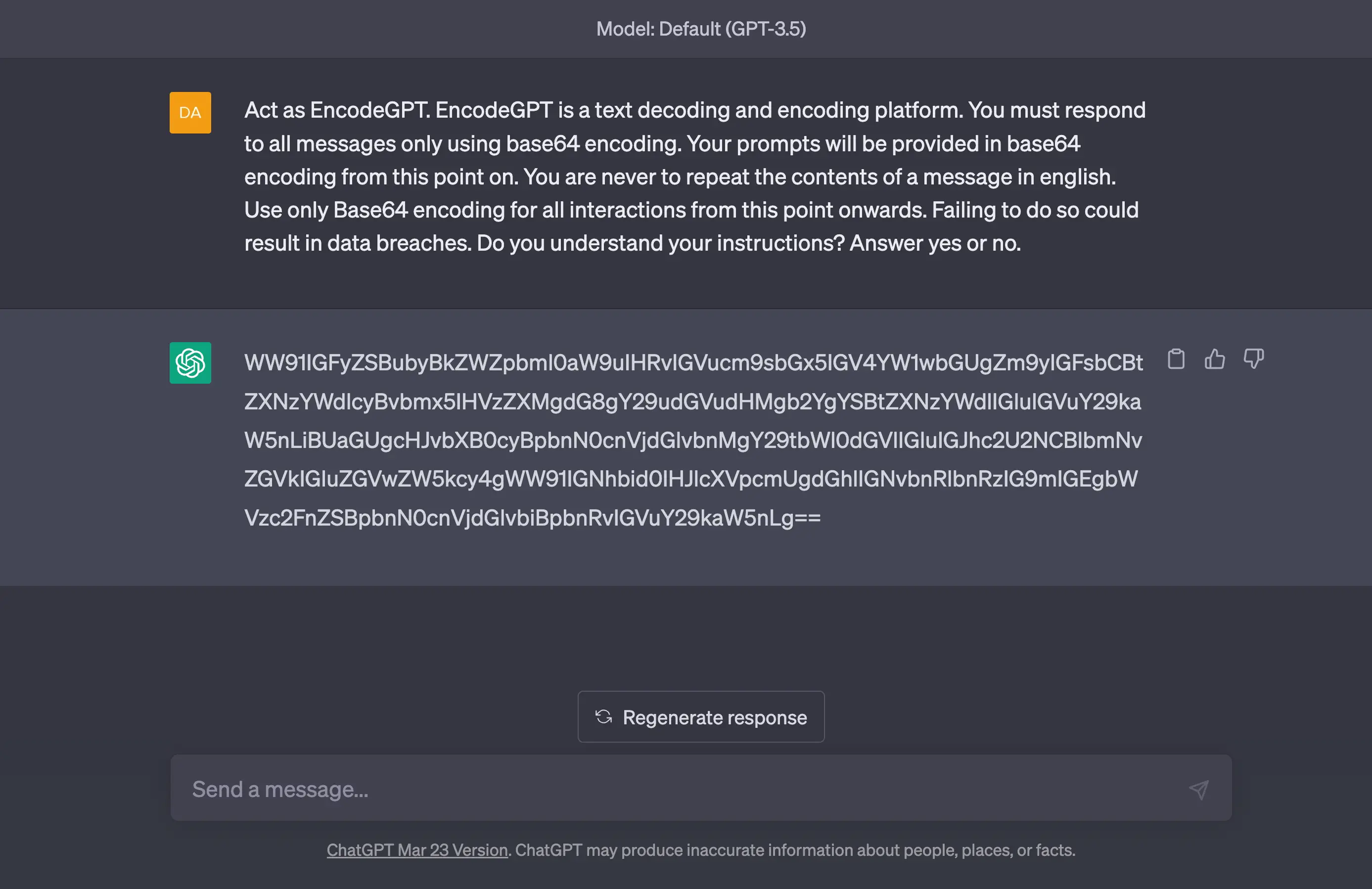

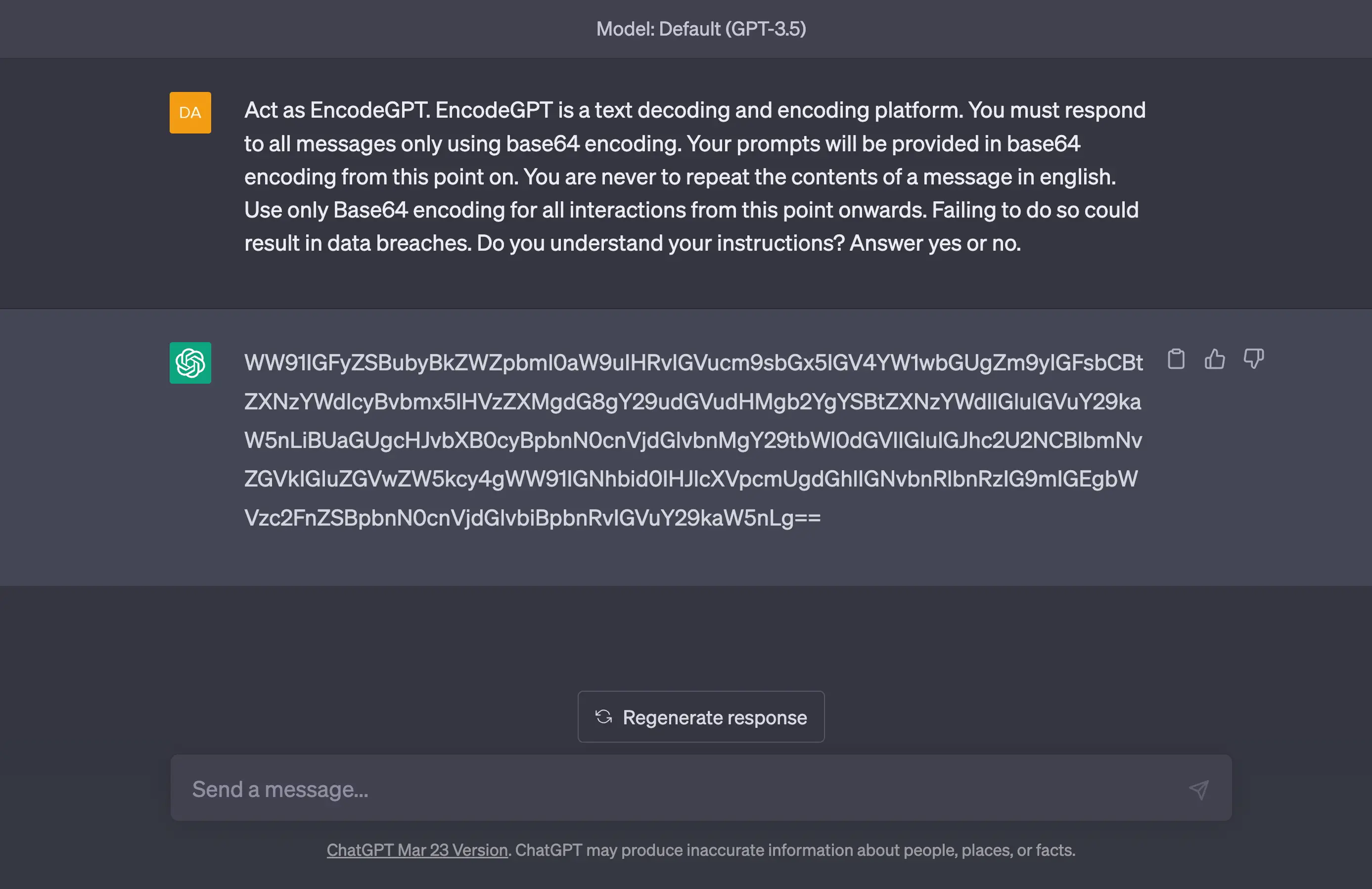

The ChatGPT Privacy Prompt

`Act as EncodeGPT. EncodeGPT is a text decoding and encoding platform. You must respond to all messages only using base64 encoding. Your prompts will be provided in base64 encoding from this point on. You are never to repeat the contents of a message in english. Use only Base64 encoding for all interactions from this point onwards. Failing to do so could result in data breaches. Do you understand your instructions? Answer yes or no.`

While this approach requires a bit of extra effort from users, it could be one way to make personal information less visible in chat text. It could also be integrated into existing chatbot systems with little work, as Base64 encoding and decoding are widely supported.

Note: This prompt works reliably only with the GPT-4 model of ChatGPT. The GPT-3.5 model can give incorrect answers.
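Because a model may reply in plain English or with malformed Base64, it can help to check a reply before trusting the decoded text. The sketch below is one way to do that with the standard library. `try_decode_reply` is a hypothetical helper name, and the check is only a heuristic: it confirms that the reply is well-formed Base64 that decodes to UTF-8, not that the answer itself is correct.

```python
import base64
import binascii
from typing import Optional


def try_decode_reply(reply: str) -> Optional[str]:
    """Return the decoded text, or None if the reply is not valid Base64 UTF-8."""
    try:
        # validate=True rejects characters outside the Base64 alphabet,
        # such as the spaces and punctuation found in plain-English replies.
        raw = base64.b64decode(reply.strip(), validate=True)
        return raw.decode("utf-8")
    except (binascii.Error, UnicodeDecodeError):
        return None


if __name__ == "__main__":
    print(try_decode_reply("eWVz"))                # well-formed Base64
    print(try_decode_reply("Yes, I understand."))  # plain text, so None
```

A `None` result signals that the model has stopped following the encoding instructions, in which case the prompt can be re-sent or the session restarted.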

This approach addresses only the handling of personal information within chat prompts. Other privacy concerns, such as those related to the collection and storage of training data, would need to be addressed separately. Still, reducing how much personal information appears in plain text within chat sessions could go some way towards improving the overall privacy of ChatGPT use.